AdTech has a data quality problem that often stays hidden in plain sight.

Many platforms continue to rely on consensus-based IP intelligence: data that appears consistent across vendors but lacks continuous validation against real internet behavior. As performance pressure increases across advertising and retail media, the limitations of this model are becoming harder to ignore in production environments.

For teams responsible for traffic quality, fraud prevention, and geographic targeting, the way consensus breaks down has real operational consequences. I’ve heard about those consequences directly from these teams, and this is what I’ve learned.

What Consensus IP Data Actually Means

Consensus-based IP data emerges when providers align their outputs to reduce discrepancies across the ecosystem. Rather than independently verifying how the internet actually behaves, multiple vendors converge on shared answers, sometimes because they draw from the same upstream sources, and sometimes because alignment itself becomes the goal.

Historically, this approach offered real advantages. Fewer vendor disputes. Cleaner reconciliation across partners. Easier ecosystem standardization. When two providers agree on a location, nobody asks difficult questions.

But consensus models optimize for agreement, not accuracy. They don't inherently verify whether the shared answer reflects current internet reality. And as network infrastructure evolves, with IPv6 adoption accelerating, CGNAT deployments expanding, and adversaries actively manipulating signals, the gap between agreed-upon and correct is widening.

In conversations with AdTech platforms across the ecosystem, we hear a consistent pattern: some companies choose their IP data provider not because it's the most accurate, but because it's what everyone else uses. The logic is straightforward: if there's a discrepancy and you're using the same vendor as the rest of the chain, you won't get blamed. It's a "nobody gets fired for buying IBM" dynamic applied to geolocation.

The industry is starting to question this. The conversation is shifting from "does this vendor agree with others?" to "can this data actually be trusted and validated?"

Where AdTech Teams Feel the Impact

When IP intelligence drifts from real-world infrastructure behavior, the effects ripple across multiple workflows and across every layer of the programmatic stack.

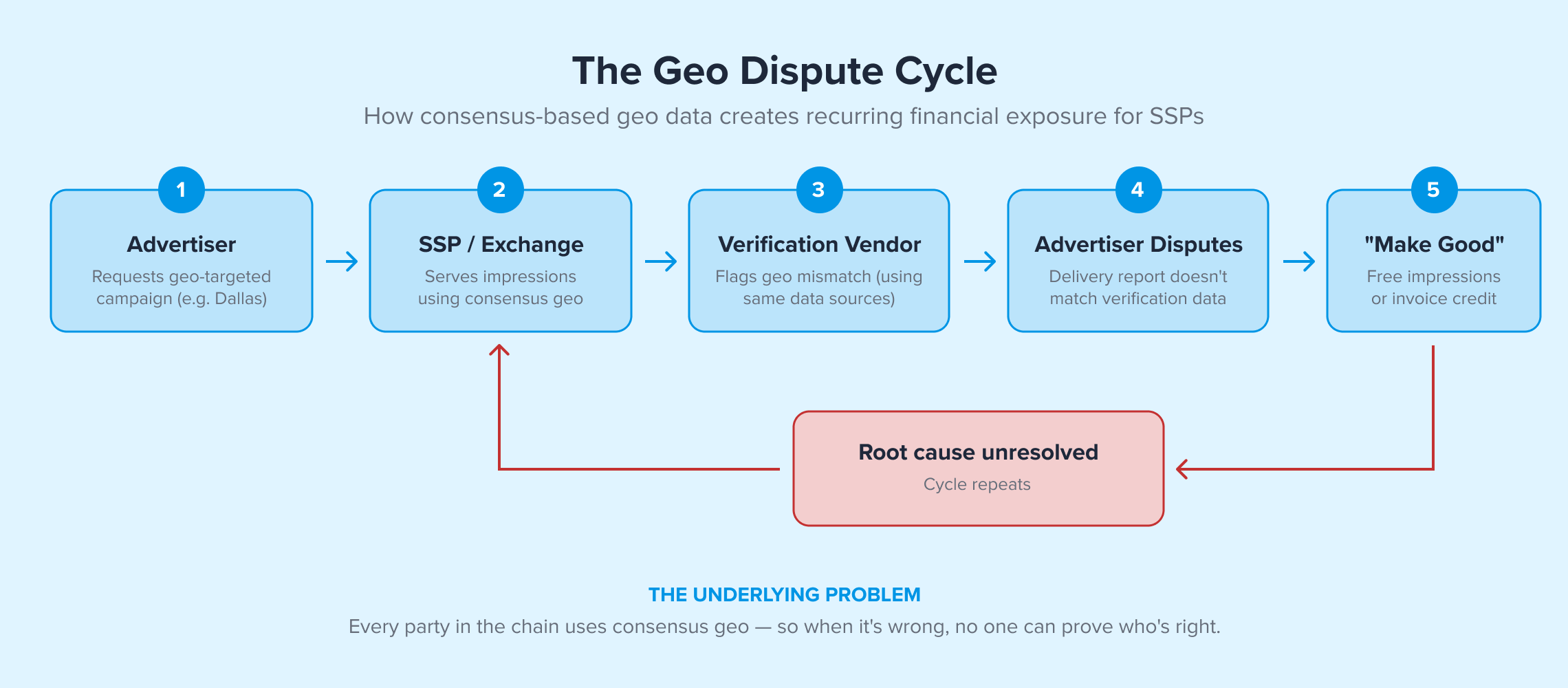

Geographic Mistargeting and the "Make Good" Cycle

City- and region-level inaccuracies don't always surface as obvious errors. They show up as gradual performance degradation: budgets allocated to the wrong DMAs, regional campaigns delivering outside their intended footprint, personalization logic making decisions on stale location signals.

We've spoken with SSPs that report geo mismatch rates as high as 50–70% on certain campaigns. The downstream consequences follow a predictable pattern: advertisers flag delivery reports that don't match their verification data, publishers push back, and the platform absorbs the cost through "make good" compensations, such as free impression allocations or, in severe cases, invoice cancellations. One global ad exchange described this as an ongoing financial exposure it has simply learned to absorb rather than resolve, because the root cause sits in the data layer everyone shares.

Native ad platforms expanding into new markets face a different version of the same problem. One platform told us it needed to deliver 100,000 geo-targeted impressions daily but could place only about half of its traffic in the correct area, which directly limited campaign pacing and revenue.

Traffic Quality and the Verification Gap

Geo accuracy and traffic quality are deeply connected. When IP geolocation is wrong, it distorts the signals that verification and fraud detection systems depend on to classify traffic. An impression flagged as "out-of-geo" might actually be correctly located, or it might be genuinely invalid traffic that slipped through because the underlying IP classification was stale.

One mobile ad platform reported that its current provider returns no usable geo data for 25% of its IP traffic, and that share is increasing. That isn't a fraud problem per se; it's a classification gap that makes fraud harder to distinguish from legitimate traffic.
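To make the classification gap concrete, here is a minimal sketch that buckets impressions against a campaign's target regions. It assumes each impression carries the region returned by an IP lookup, or `None` when the provider returns no usable geo. `Impression`, `classify`, `geo_breakdown`, and the `US-NY`-style region codes are hypothetical names for illustration, not part of any IPinfo API. The point is the third bucket: "unknown" traffic is neither confirmed in-geo nor confirmed invalid.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass
class Impression:
    ip: str
    region: Optional[str]  # region from the IP lookup; None means no usable geo


def classify(imp: Impression, target_regions: set[str]) -> str:
    """Bucket one impression relative to a campaign's target regions."""
    if imp.region is None:
        # No geo signal: cannot be confirmed as in-geo or flagged as out-of-geo.
        return "unknown"
    return "in_geo" if imp.region in target_regions else "out_of_geo"


def geo_breakdown(imps: list[Impression], target_regions: set[str]) -> dict[str, float]:
    """Return the share of impressions in each bucket (all zeros for empty input)."""
    buckets = ("in_geo", "out_of_geo", "unknown")
    if not imps:
        return {b: 0.0 for b in buckets}
    counts = Counter(classify(i, target_regions) for i in imps)
    return {b: counts[b] / len(imps) for b in buckets}


if __name__ == "__main__":
    # Illustrative data using RFC 5737 documentation addresses.
    sample = [
        Impression("203.0.113.10", "US-NY"),
        Impression("203.0.113.11", "US-NJ"),
        Impression("198.51.100.20", None),
        Impression("192.0.2.30", "US-CA"),
    ]
    print(geo_breakdown(sample, {"US-NY", "US-NJ"}))
```

In this toy sample, a quarter of the traffic lands in "unknown", and no downstream verification rule can say whether it is legitimate.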

Optimization Blind Spots

Modern bidding and optimization systems depend on clean input signals. When IP intelligence contains unverified assumptions about location, network type, or connection environment, downstream systems inherit that uncertainty at scale.

This affects bid efficiency, audience modeling, supply-path optimization, and retail media attribution. In high-volume programmatic environments processing billions of bid requests monthly, even small signal drift compounds quickly. A DSP making pre-bid filtering decisions on stale hosting classifications will either over-block legitimate inventory or under-filter fraudulent traffic, both of which eat margin.
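The over-block/under-filter trade-off can be illustrated with a small sketch that compares a pre-bid hosting filter built from an outdated snapshot against the ranges as they look today. The scenario is hypothetical: it uses RFC 5737 documentation ranges, and `STALE_HOSTING`, `CURRENT_HOSTING`, and `filter_errors` are illustrative names, not any vendor's data or API. Only the standard-library `ipaddress` module is used.

```python
import ipaddress
from typing import Iterable, Union

Network = Union[ipaddress.IPv4Network, ipaddress.IPv6Network]

# Hypothetical scenario using RFC 5737 documentation ranges:
# 198.51.100.0/24 was a hosting range when the snapshot was taken but has since
# been reassigned to a residential ISP; 203.0.113.0/24 is a newly provisioned
# datacenter range the snapshot does not know about.
STALE_HOSTING = [ipaddress.ip_network("198.51.100.0/24")]
CURRENT_HOSTING = [ipaddress.ip_network("203.0.113.0/24")]


def is_hosting(ip: str, ranges: Iterable[Network]) -> bool:
    """True if the IP falls inside any of the given hosting ranges."""
    addr = ipaddress.ip_address(ip)
    return any(addr in net for net in ranges)


def filter_errors(bid_ips, stale, current):
    """Compare a pre-bid filter built on a stale snapshot with current reality.

    over_blocked: legitimate requests rejected because the snapshot is outdated.
    under_filtered: hosting traffic let through because the snapshot missed it.
    """
    over_blocked = [ip for ip in bid_ips if is_hosting(ip, stale) and not is_hosting(ip, current)]
    under_filtered = [ip for ip in bid_ips if is_hosting(ip, current) and not is_hosting(ip, stale)]
    return over_blocked, under_filtered


if __name__ == "__main__":
    requests = ["198.51.100.7", "203.0.113.9", "192.0.2.1"]
    blocked, missed = filter_errors(requests, STALE_HOSTING, CURRENT_HOSTING)
    print("over-blocked:", blocked)
    print("under-filtered:", missed)
```

The same stale snapshot produces both error types at once: the residential user at `198.51.100.7` is rejected, while the datacenter request from `203.0.113.9` passes.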

Why Measurement-Backed IP Intelligence Performs Differently

The alternative to consensus is a different methodology: measurement.

Evidence-based IP intelligence starts with direct internet measurement rather than inference or self-reported registration data. ProbeNet, IPinfo’s internet measurement platform, continuously observes how traffic actually moves across global networks, conducting tens of billions of measurements weekly from more than 1,300 points of presence worldwide. This means IP classifications are collated, categorized, and verified against live network behavior, not derived from what vendors collectively assume.
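Why can latency pin down location at all? A round-trip time from a known probe puts a hard ceiling on how far away the target can be, and ceilings from several probes intersect. The sketch below is a simplified form of the general constraint-based geolocation idea, not a description of how ProbeNet works. It assumes signals in fiber travel at roughly 200 km per millisecond; the probe positions, RTTs, and candidate cities are made-up illustrative inputs, and `feasible_locations` is a hypothetical helper.

```python
import math

# Assumption: light in optical fiber covers roughly 200 km per millisecond
# (about two-thirds of its speed in a vacuum). Routing detours and queuing only
# add delay, so this yields an upper bound on distance, not a point estimate.
FIBER_KM_PER_MS = 200.0
EARTH_RADIUS_KM = 6371.0


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points, in kilometres."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dp = p2 - p1
    dl = math.radians(lon2 - lon1)
    a = math.sin(dp / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dl / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def max_distance_km(rtt_ms: float) -> float:
    """Farthest the target can be from a probe, given a round-trip time."""
    return (rtt_ms / 2.0) * FIBER_KM_PER_MS


def feasible_locations(candidates: dict[str, tuple[float, float]],
                       probes: list[tuple[float, float, float]]) -> list[str]:
    """Keep candidates that satisfy every probe's distance constraint."""
    return [
        name
        for name, (lat, lon) in candidates.items()
        if all(haversine_km(plat, plon, lat, lon) <= max_distance_km(rtt)
               for plat, plon, rtt in probes)
    ]


# Illustrative inputs: (latitude, longitude, measured RTT in ms) per probe.
PROBES = [
    (40.71, -74.01, 4.0),   # probe in New York: target within 400 km
    (41.88, -87.63, 12.0),  # probe in Chicago: target within 1,200 km
]
CANDIDATES = {
    "Philadelphia": (39.95, -75.17),
    "Boston": (42.36, -71.06),
    "Los Angeles": (34.05, -118.24),
}

if __name__ == "__main__":
    print(feasible_locations(CANDIDATES, PROBES))
```

With these inputs, Boston satisfies the New York constraint but sits roughly 1,370 km from Chicago, so only Philadelphia survives both. A registration record cannot be checked this way; a measurement can.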

For AdTech teams, this distinction translates into concrete operational differences. Geographic precision becomes more stable because it's tied to measured latency and routing paths, not registration databases that may be months or years out of date. IVT detection inputs gain higher fidelity because hosting, proxy, and VPN classifications reflect current infrastructure behavior. Accuracy radius transparency lets platforms understand the confidence level of each geolocation result rather than treating every answer as equally certain.
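One way a platform might act on accuracy radius is to let it decide the finest targeting granularity a result can support, instead of treating every city answer as equally certain. This is a minimal sketch under stated assumptions: `GeoResult` is a hypothetical lookup shape whose field names are illustrative rather than IPinfo's response schema, and the 25 km and 200 km thresholds are placeholders a team would tune per campaign.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical thresholds; a real platform would tune these per campaign.
CITY_MAX_RADIUS_KM = 25.0
REGION_MAX_RADIUS_KM = 200.0


@dataclass
class GeoResult:
    # Hypothetical lookup shape; field names are illustrative, not a vendor schema.
    city: Optional[str]
    region: Optional[str]
    country: Optional[str]
    accuracy_radius_km: Optional[float]


def targeting_level(r: GeoResult) -> str:
    """Return the finest targeting granularity this result can support."""
    radius = r.accuracy_radius_km
    if radius is not None:
        if r.city and radius <= CITY_MAX_RADIUS_KM:
            return "city"
        if r.region and radius <= REGION_MAX_RADIUS_KM:
            return "region"
    # Unknown or very large radius: fall back to country, or nothing at all.
    return "country" if r.country else "none"


if __name__ == "__main__":
    for radius in (10.0, 120.0, 500.0):
        print(radius, targeting_level(GeoResult("Newark", "New Jersey", "US", radius)))
```

The design choice here is conservative: a result with no stated radius is never used for city- or region-level targeting, which trades some reach for fewer out-of-footprint deliveries.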

Perhaps most critically for the consensus problem: when a measurement-backed answer disagrees with a consensus-based one, there's an evidence trail. Teams can audit why a classification was made, defend it during advertiser disputes, and demonstrate that their data reflects what the internet is actually doing, not just what other vendors agree on.

This matters because the ecosystem currently has no independent ground truth for IP geolocation. When vendors disagree, there's no authoritative reference, just competing claims. Measurement-based methodology offers an answer that can be explained, tested, and defended.

CMT recently adopted our data for that reason.

The Direction the AdTech Ecosystem Is Heading

Across AdTech, retail media, and fraud-sensitive acquisition channels, the bar for data quality is rising. Privacy regulation is tightening and cookies are disappearing. As these traditional targeting signals fade, IP context becomes more foundational, which makes the quality of that context more consequential.

Teams are increasingly asking harder questions of their data infrastructure: Can this signal be validated independently? Does it reflect current infrastructure behavior, or a snapshot from months ago? Will it adapt as the internet changes, as IPv6 grows, as new proxy networks emerge, as fraud tactics evolve?

Consensus once provided operational convenience in an ecosystem that valued alignment above all else. But the industry has evolved, expectations have changed, and there is now a better way to meet the higher bar.

Platforms that ground their data foundations in measured internet reality, rather than vendor agreement, position themselves to optimize more confidently, defend performance outcomes more effectively, and adapt faster as the ecosystem continues to shift.

The more useful question for any platform to ask about its IP data is simply: is it actually right, and what is the evidence?

About the author

As the product marketing manager, Fernanda helps customers better understand how IPinfo products can serve their needs.